The Agentic Hive Mind: Orchestrating the Future of AI Development

The Problem: The Coordination Gap

While getting an LLM to write a single function is now common, coordinating eighteen agents to build a complex system remains a significant challenge. Without a framework, agents often redo work, lose context, or ossify bugs into test suites because they lack a shared standard.

The Solution: A Portable Orchestration Framework

The solution is an orchestration framework. Most agentic tools already ship one, partly because a framework makes the tool sticky: if it is non-interoperable and tuned to the foibles of one chosen LLM, the cost of switching soon becomes too high to overcome.

Mind Rocket’s framework is not an application architecture, but a coordination engine. It defines an operating model (who does the work, how they do it, and how it’s verified) as versioned code. Because that model lives in the repository rather than inside any one vendor's tooling, it is portable between LLM vendors.
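As a rough illustration of what portability could look like in practice, the sketch below renders one source of truth into two runtime instruction files. It is not Mind Rocket's implementation: the OPERATING_MODEL dict, the render and project functions, and the generated Markdown layout are all assumptions made for illustration. Only the file names CLAUDE.md and AGENTS.md, the example rule, and the agent names come from this article.

```python
import tempfile
from pathlib import Path

# Hypothetical source of truth. In the framework this would be versioned
# YAML/Markdown in the repository; a plain dict keeps the sketch stdlib-only.
OPERATING_MODEL = {
    "rules": ["use uv run pytest"],
    "agents": ["api-designer", "security-tester"],
}

# Runtime files named in the article; the rendered layout is an assumption.
TARGETS = ("CLAUDE.md", "AGENTS.md")


def render(model: dict) -> str:
    """Render the operating model as a Markdown document."""
    lines = ["# Operating model (generated, do not edit)", "", "## Rules"]
    lines += [f"- {rule}" for rule in model["rules"]]
    lines += ["", "## Agents"]
    lines += [f"- {name}" for name in model["agents"]]
    return "\n".join(lines) + "\n"


def project(model: dict, repo_root: Path) -> list[Path]:
    """Write the same rendered content to every runtime target file."""
    written = []
    for name in TARGETS:
        path = repo_root / name
        path.write_text(render(model), encoding="utf-8")
        written.append(path)
    return written


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for path in project(OPERATING_MODEL, Path(tmp)):
            print(path.name)
```

The design point is that the generated files are disposable outputs: switching tools means adding a target, not rewriting the operating model.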

Core Pillars of the Framework

Everything as Code: Specifications, rules, and handoffs are versioned artifacts in the repository to prevent context drift.

Context Durability Over Code Quality: High-quality code is a downstream result of high-quality context; a clear handoff matters more than a model's raw capability.

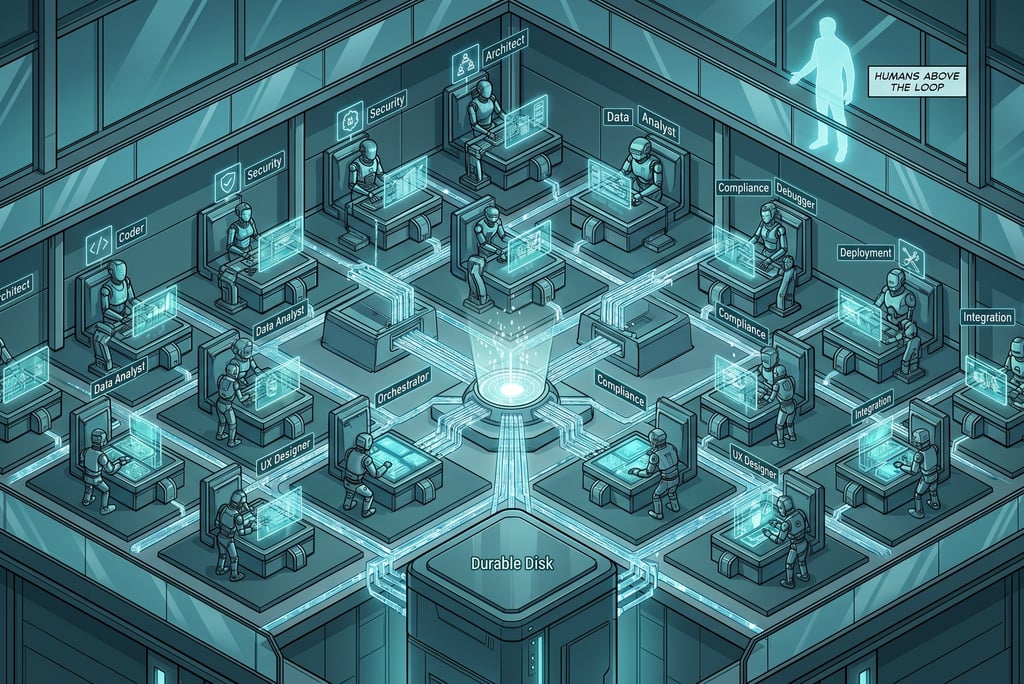

Humans Above the Loop: Human attention shifts from reviewing every line of code to designing the verification systems and gates that govern the agents.

Durable State on Disk: To combat "systemised forgetfulness" (context compaction), all orchestration state lives on disk rather than in ephemeral chat histories.
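A minimal sketch of the "durable state on disk" pillar, assuming a single JSON state file. The file location (.orchestration/state.json), the field names, and the step name "reproduce" are hypothetical; the article does not specify the on-disk format.

```python
import json
import os
import tempfile
from pathlib import Path


def save_state(path: Path, state: dict) -> None:
    """Write state atomically: write a temp file, then rename it over the target."""
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(state, handle, indent=2, sort_keys=True)
    os.replace(tmp_name, path)  # atomic when both paths share a filesystem


def load_state(path: Path) -> dict:
    """Return the saved state, or an empty starting state if none exists."""
    if not path.exists():
        return {"workflow": None, "completed_steps": []}
    return json.loads(path.read_text(encoding="utf-8"))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        state_file = Path(tmp) / ".orchestration" / "state.json"
        state = load_state(state_file)
        state["workflow"] = "bugfix"
        state["completed_steps"].append("reproduce")
        save_state(state_file, state)
        # A fresh session with no chat history can resume from this file.
        print(load_state(state_file)["completed_steps"])
```

Because the state is re-read from disk at the start of each step, a compacted or restarted chat session loses nothing the orchestrator depends on.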

The Layered Operating Model

The framework is organised into a hierarchy of layers so that no logic "bleeds" across boundaries:

Rules: Short, binary constraints (e.g., "use uv run pytest") injected into every prompt.

Agents: 18 specialised roles (e.g., api-designer, security-tester) with bounded responsibilities.

Workflows: YAML-defined sequences like build, prototype, or bugfix that define when work happens.

Standards: Long-form engineering rationales used as reference material on demand.
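The layers above could be modelled roughly as follows. This is a sketch under stated assumptions, not the framework's schema: the class names, the file_scope field, the example task, and the prompt layout are hypothetical. It shows rules being injected into every prompt while agents and workflow steps stay separate; standards are deliberately left out of the prompt because they are reference material consulted on demand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    text: str  # short, binary constraint


@dataclass(frozen=True)
class Agent:
    name: str
    file_scope: tuple[str, ...]  # hypothetical: globs the agent may edit


@dataclass(frozen=True)
class Step:
    agent: str  # name of the agent responsible for this step
    task: str


@dataclass(frozen=True)
class Workflow:
    name: str
    steps: tuple[Step, ...]  # ordered: defines when work happens


def build_prompt(agent: Agent, step: Step, rules: list[Rule]) -> str:
    """Compose a prompt: every rule is injected, then role, scope and task."""
    if step.agent != agent.name:
        raise ValueError(f"step is assigned to {step.agent}, not {agent.name}")
    rule_lines = "\n".join(f"- {rule.text}" for rule in rules)
    return (
        f"Rules:\n{rule_lines}\n\n"
        f"Role: {agent.name}\n"
        f"Allowed files: {', '.join(agent.file_scope)}\n"
        f"Task: {step.task}"
    )


if __name__ == "__main__":
    rules = [Rule("use uv run pytest")]
    designer = Agent("api-designer", ("api/**",))
    bugfix = Workflow(
        "bugfix", (Step("api-designer", "Describe the failing endpoint"),)
    )
    print(build_prompt(designer, bugfix.steps[0], rules))
```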

Key Performance Mechanisms

The Hive Model: Unlike a chaotic "swarm," the framework uses a hive model where specialist agents work within bounded scopes under centralised orchestration.

Verification over Assertion: An agent's claim that "all tests pass" is not accepted as evidence; to proceed, the system requires the actual command output (stdout) and green results from pre-commit validators.

Portability: While optimised for tools like Claude Code, the framework is designed to be tool-agnostic, projecting authoritative YAML/Markdown sources into various runtime formats like CLAUDE.md or AGENTS.md.
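To make "verification over assertion" concrete, here is a hedged sketch of a gate that trusts only captured command results. The Evidence type and the run_check and gate functions are hypothetical names, and the stand-in commands exist only so the example runs anywhere; a real gate would run the project's own test and pre-commit commands.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    command: tuple[str, ...]
    returncode: int
    stdout: str


def run_check(command: list[str]) -> Evidence:
    """Run a check and capture what it actually printed and returned."""
    result = subprocess.run(command, capture_output=True, text=True, check=False)
    return Evidence(tuple(command), result.returncode, result.stdout)


def gate(evidence: list[Evidence]) -> bool:
    """Pass only if there is evidence and every check exited with code 0."""
    return bool(evidence) and all(item.returncode == 0 for item in evidence)


if __name__ == "__main__":
    # Stand-in commands: one that succeeds and prints, one that fails.
    passing = run_check([sys.executable, "-c", "print('3 passed')"])
    failing = run_check([sys.executable, "-c", "import sys; sys.exit(1)"])
    print(gate([passing]))
    print(gate([passing, failing]))
    print(gate([]))  # a claim with no captured evidence does not pass
```

Storing the captured stdout alongside the exit code means a human or a later agent can audit why a gate opened, rather than taking the result on trust.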

Conclusion: Frameworks Signal Maturity

This framework represents a shift from agents as simple assistants to agents as a disciplined, automated workforce. By moving away from "conversational" ephemeral state and toward durable context written to disk, Mind Rocket has built a system where AI doesn't just generate code, but follows a governed, versioned operating model.

The transition is marked by three critical shifts in how we build:

From Inference to Orchestration: We no longer rely on a model to "guess" the next step; instead, we use explicit YAML workflows to define the exact sequence of red-green-refactor cycles and quality gates.

From Generalists to Specialists: The "Hive Mind" replaces a single large, diluted prompt with 18 specialised agents, each constrained by a narrow file scope and a curated set of rules.

From Reviewing to Governing: Human expertise is elevated "above the loop," shifting from fatiguing line-by-line code review to the strategic design of the verification systems and validators that keep the hive in check.

Ultimately, this approach closes the coordination gap by accepting that models will forget and ensuring that the framework does not.

© Mind Rocket Services Ltd 2026. All rights reserved.

Avi Sinharay

CEng, MIET, MEng, MA (Cantab.)

Fractional CTO, Director/VP of Technology

Core Expertise

Technology Leadership, AI Native Dev, Operating Model Design, Engineering Culture

Domains

Health Tech, Media Tech